In the software development world, Software Composition Analysis (SCA) was a best practice before May 2021. After the White House “Executive Order on Improving the Nation’s Cybersecurity”, SCA became a business and regulatory imperative.

While the Executive Order does not explicitly mention “Software Composition Analysis”, SCA is the software industry term that covers the processes and tools needed to improve software supply chain integrity and address many other risks.

Even without a governmental directive, SCA is imperative for modern software development – for both proprietary and open source software. A common misconception is that SCA is simply scanning a codebase to identify components. While scanning is important, it is only one of the many processes that make up SCA.

This blog post outlines the fundamentals of Software Composition Analysis and the steps to implement SCA in an organization.

What is SCA?

Software Composition Analysis (SCA) is a set of processes and tools to evaluate and manage software from a component perspective. The term originated from industry analysts.

There are eight essential elements to SCA:

- Software Component Identification

- Dependency Management

- Software Bills of Materials

- License Identification

- Vulnerability Reporting

- Code Quality Reporting

- Community Health Reporting

- SCA Management

If you use third-party components in your own software (and everyone does), you need to know:

- Which components and where they come from (provenance)

- The license terms and how those apply to your use of a component

- Any component vulnerabilities and whether/how they apply to your use of a component

- The code quality of the component

- How the component is developed and built including any dependencies

- How you use the component internally or externally

SCA is usually discussed as only applying to open source software, but it applies equally to proprietary software. Most modern proprietary software embeds open source software, or requires open source software to run, and proprietary software is also subject to as many licensing, vulnerability and quality issues as open source software, if not more. The key challenge is that you will probably not have the SCA data for proprietary software unless you demand it from your supplier.

The distinction between a component and a package varies widely in general usage and depends on your position in a software supply chain. The evolving best practice is to say:

- “Component” is the high-level name that may apply to an open source project or sub-project or a product.

- “Package” is a file that contains software that you can install. The name of a package will normally have a specific version that can be represented with a Package URL (PURL).
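To make the Package URL idea concrete, here is a minimal sketch of how a PURL of the common `pkg:type/namespace/name@version` shape breaks down into its parts. It is deliberately simplified: the `SimplePurl` class and `parse_purl` function are hypothetical names for illustration, qualifiers and subpaths are dropped, and type-specific normalization rules from the PURL specification are not applied. For real work, a maintained PURL library is the better choice.

```python
from dataclasses import dataclass
from typing import Optional
from urllib.parse import unquote


@dataclass(frozen=True)
class SimplePurl:
    """Hypothetical, reduced view of a Package URL."""
    type: str
    namespace: Optional[str]
    name: str
    version: Optional[str]


def parse_purl(purl: str) -> SimplePurl:
    """Parse the common pkg:type/namespace/name@version shape.

    Simplified on purpose: qualifiers (?key=value) and subpaths (#path)
    are discarded, and no per-type normalization is performed.
    """
    scheme, sep, rest = purl.partition(":")
    if scheme != "pkg" or not sep:
        raise ValueError(f"not a Package URL: {purl!r}")

    # Drop the subpath and qualifiers, then any leading or trailing slashes.
    rest = rest.split("#", 1)[0].split("?", 1)[0].strip("/")

    # The version, when present, follows the last "@".
    if "@" in rest:
        path, _, version = rest.rpartition("@")
    else:
        path, version = rest, ""

    segments = path.split("/")
    if len(segments) < 2 or not all(segments):
        raise ValueError(f"a type and a name are required: {purl!r}")

    pkg_type = segments[0].lower()
    name = unquote(segments[-1])
    # Everything between the type and the name is the namespace.
    namespace = "/".join(unquote(s) for s in segments[1:-1]) or None
    return SimplePurl(pkg_type, namespace, name, unquote(version) or None)


print(parse_purl("pkg:npm/%40angular/core@16.2.0"))
print(parse_purl("pkg:pypi/django@4.2"))
```

The point of the example is that one string carries the package type, name and version together, which is what makes it usable as a key across the other SCA processes.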

1. Software Component Identification

The foundation for all SCA processes is identifying third-party software components in use and those that may be used in the future. There are several techniques for identifying software components:

- Scanning a codebase to identify components (packages), based on the structure of the codebase, the content of the files and any available metadata. In this scenario, you will often be able to identify license information from the files in the codebase.

- Downloading a package. In this scenario, you know the package because you are choosing it from some repository, but it is critical to track that origin and relevant metadata for the package from the repository.

- Analyzing package dependencies. In this scenario, you identify packages that you will need from a package manifest file such as package.json or package-lock.json for an npm package or Node module. The package manifest file may contain license and other SCA data depending on the type of package. If the package manifest does not contain license data, you will normally need to look up the license from the package repository.

- Matching files in your codebase to an external repository based on a digital fingerprint (e.g., SHA-1 or SHA-256). In this scenario, the information obtained about the component is limited to what is available from the repository.
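The fingerprint-matching technique in the last bullet can be sketched in a few lines. This is an illustration under stated assumptions, not how any particular tool works: the `known_index` dictionary stands in for an external repository of known files, and the function names and the sample PURL are hypothetical.

```python
import hashlib
from pathlib import Path
from typing import Dict, Optional


def fingerprint_bytes(data: bytes) -> str:
    """Return the SHA-256 hex digest of some file content."""
    return hashlib.sha256(data).hexdigest()


def fingerprint_file(path: Path, chunk_size: int = 65536) -> str:
    """Hash a file in chunks so large files do not need to fit in memory."""
    digest = hashlib.sha256()
    with path.open("rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def match_content(data: bytes, known_index: Dict[str, str]) -> Optional[str]:
    """Look up content in an index of digest -> package identifier."""
    return known_index.get(fingerprint_bytes(data))


# Hypothetical index: in practice this would come from an external repository.
original = b"function leftPad(str, len) { /* ... */ }\n"
known_index = {fingerprint_bytes(original): "pkg:npm/example-pad@1.0.0"}

print(match_content(original, known_index))            # exact copy: found
print(match_content(original + b"\n", known_index))    # one extra byte: None
```

The second lookup shows why exact matching is limited: a single changed byte produces a different digest, so the file no longer matches anything in the index.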

The primary technique to identify a component has a major impact on what else you will need to do to get the license, vulnerability or quality data.

Scanning usually provides the most SCA data from a single step, but that depends on how much data is stored in the codebase files. The biggest difference is that source files normally contain more SCA data and binaries contain less. The SCA data obtained from Matching depends on the resilience of the matching technique and the quality of the data offered in the external repository. Exact matching for digital fingerprints is notoriously brittle in any use case where source code is compiled and linked, like binaries based on C/C++ source code.

The hardest problem in component identification is identifying the contents of binary files (such as those compiled from C/C++ or Go) or “packed” files (like Web-packed or minified JavaScript). In these cases, tracing tools and data can identify the corresponding source code. This typically requires access to relevant build artifacts created from standard build tooling or specialized build tracing tools.

In general, software component identification requires a combination of scanning, matching, and tracing techniques or processes where the configuration of techniques depends on the languages, frameworks or platforms in use. It is extremely common to have multiple configurations for a product or product module, such as a product with a Java, C/C++ or Python backend, a JavaScript Web frontend and native mobile apps.

2. Dependency Management

Dependency management is a blessing and a curse for modern software development. When selecting a component to use in a product or project, you are likely inheriting dozens (or more) of dependencies on other components. These dependencies may or may not be required for your use case and are often recursive. In any case, you need to know what these are and you need to know the license, vulnerability, quality and other attributes of any component that you inherit from component dependencies.

The key point is to always think about a software component in use as the sum of the component and its dependencies. The techniques for identifying dependencies and their attributes are similar across package ecosystems such as Maven, npm or PyPI, but the tools are usually different for each major type of package, according to the type and format of metadata used in each type of package manifest. The resolution of package dependencies is particularly complex for a package ecosystem where there are multiple types of package metadata. A good example is the Python package ecosystem. See python-inspector for an example of this complexity and how to resolve it.
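The idea of treating a component as the sum of itself and its dependencies can be illustrated with a small traversal. This is a sketch only: the `direct` mapping is a hypothetical, already-resolved view of direct dependencies, and it ignores the version constraints, optional dependencies and ecosystem-specific metadata that make real resolution hard.

```python
from typing import Dict, List, Set


def transitive_dependencies(root: str, direct: Dict[str, List[str]]) -> Set[str]:
    """Collect every package reachable from root through direct dependencies.

    Uses an explicit stack and a 'seen' set so that recursive (cyclic)
    dependency declarations do not cause an infinite loop.
    """
    seen: Set[str] = set()
    stack = list(direct.get(root, []))
    while stack:
        name = stack.pop()
        if name in seen or name == root:
            continue
        seen.add(name)
        stack.extend(direct.get(name, []))
    return seen


# Hypothetical package names and edges, for illustration only.
direct = {
    "my-app": ["web-framework", "http-client"],
    "web-framework": ["template-engine", "http-client"],
    "http-client": ["tls-lib"],
    "template-engine": ["web-framework"],  # a cycle
}

print(sorted(transitive_dependencies("my-app", direct)))
```

Here `my-app` declares two dependencies but inherits four packages, and each of those needs its own license, vulnerability and quality data.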

3. Software Bills of Materials (SBOMs)

The hottest topic in SCA since 2021 has been the importance of Software Bills of Materials. See nexB on Software Bill of Materials and Software Composition Analysis for background on this development. It is highly unlikely that there will be a single standard for SBOMs, but it is certain that all software producing or consuming organizations will use SBOM data as the primary anchor for other SCA data. A simple analogy to the use of BOMs and components in manufacturing demonstrates how SBOMs are essential for managing your use of software components.
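As a rough illustration of what SBOM data looks like, the sketch below builds a very small document using top-level field names from the CycloneDX JSON format (`bomFormat`, `specVersion`, `version`, `components`). It is an assumption-laden example: the `build_minimal_sbom` helper and the sample component are hypothetical, the output is not validated against the CycloneDX schema, and a real SBOM would carry much more (metadata, licenses, hashes, dependency relationships).

```python
import json
from typing import Dict, List


def build_minimal_sbom(components: List[Dict[str, str]]) -> Dict[str, object]:
    """Assemble a bare-bones CycloneDX-style document from component records."""
    return {
        "bomFormat": "CycloneDX",
        "specVersion": "1.5",
        "version": 1,
        "components": [
            {
                "type": "library",
                "name": c["name"],
                "version": c["version"],
                # The PURL ties this entry to license and vulnerability data.
                "purl": c["purl"],
            }
            for c in components
        ],
    }


# Hypothetical component, for illustration only.
example_components = [
    {"name": "example-lib", "version": "1.2.3", "purl": "pkg:npm/example-lib@1.2.3"},
]

sbom = build_minimal_sbom(example_components)
print(json.dumps(sbom, indent=2))
```

Whatever the format, the component list with a stable identifier per entry is the part that other SCA data hangs from.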

4. License Identification

The primary techniques for software component license identification are:

- Scanning to directly identify license assertions or clues from codebase files or package metadata.

- Matching to look up license information from an external source.
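The scanning technique can be sketched for its simplest case: files that carry an explicit `SPDX-License-Identifier:` tag. This is far short of what a real license scanner does (full license texts, notices, unclear references and package metadata all need detection too), and the function name is hypothetical.

```python
import re
from typing import List

# Matches the tag and captures the rest of the line as the license expression.
SPDX_TAG = re.compile(r"SPDX-License-Identifier:\s*([^\r\n]+)")

# Trailing comment closers that may follow the expression on the same line.
COMMENT_CLOSER = re.compile(r"\s*(\*/|-->)\s*$")


def find_spdx_expressions(text: str) -> List[str]:
    """Return the distinct SPDX license expressions declared in a file's text."""
    found: List[str] = []
    for match in SPDX_TAG.finditer(text):
        expression = COMMENT_CLOSER.sub("", match.group(1).strip())
        if expression and expression not in found:
            found.append(expression)
    return found


sample = (
    "// SPDX-License-Identifier: Apache-2.0\n"
    "int main(void) { return 0; }\n"
    "/* SPDX-License-Identifier: MIT OR GPL-2.0-only */\n"
)
print(find_spdx_expressions(sample))
```

A file with no tag returns an empty list, which is exactly where the harder detection work, or a lookup against an external source, begins.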

The complexity of finding and validating component license information is highly variable depending on the clarity of the information provided by the software authors.

Key considerations for license identification are:

- A single component may have files or subcomponents under dozens or more different licenses.

- The applicable license may depend on how the component or the set of subcomponents are used.

- Software component licenses are generally stable over long periods of time so the invested effort to curate a complex component license is usually reusable for later versions of the same component.

License Clarity Scoring is one approach to provide users with a confidence level regarding scan results, based on a set of criteria that indicate how clearly, comprehensively and accurately a software project has defined and communicated the licensing that applies to the project software. See License Clarity Scoring in ScanCode for more information.

5. Vulnerability Reporting

Software vulnerability reporting may be the hardest part of software composition analysis because the data is so volatile. When identifying a package-version using PURL, the first step is to investigate whether there are any known vulnerabilities and whether those vulnerabilities have a known fix (which usually entails upgrading to a later package-version). The challenge is that information about software vulnerabilities and fixes often changes on a daily basis. To manage vulnerability data, keep a robust internal BOM of the software components for each product and refresh the vulnerability data on a daily or weekly basis.
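The refresh step described above amounts to re-checking a stored BOM against the latest advisory data. The sketch below shows the shape of that check under heavy simplifying assumptions: the advisory records, their `fixed_in` field and the `EXAMPLE-` identifiers are all hypothetical, versions are assumed to be plain dotted integers, and real advisories use affected-version ranges that need ecosystem-aware comparison.

```python
from typing import Dict, List, Tuple


def version_key(version: str) -> Tuple[int, ...]:
    """Turn '1.4.2' into (1, 4, 2). Assumes purely numeric dotted versions."""
    return tuple(int(part) for part in version.split("."))


def find_affected(
    bom: Dict[str, str],
    advisories: Dict[str, List[Dict[str, str]]],
) -> List[Tuple[str, str, str, str]]:
    """Return (package, version in use, advisory id, fixed version) tuples.

    A package is reported when its version is lower than the version
    in which the advisory says the issue was fixed.
    """
    findings = []
    for name, version in bom.items():
        for advisory in advisories.get(name, []):
            if version_key(version) < version_key(advisory["fixed_in"]):
                findings.append((name, version, advisory["id"], advisory["fixed_in"]))
    return findings


# Hypothetical BOM and advisory snapshot, for illustration only.
bom = {"example-lib": "1.4.2", "other-lib": "2.0.0"}
advisories = {
    "example-lib": [{"id": "EXAMPLE-0001", "fixed_in": "1.5.0"}],
    "other-lib": [{"id": "EXAMPLE-0002", "fixed_in": "1.9.3"}],
}

for finding in find_affected(bom, advisories):
    print(finding)
```

Running the same check on a schedule against a refreshed `advisories` snapshot is what turns a static BOM into ongoing vulnerability reporting.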

Another challenge is finding high quality vulnerability data for components. This is difficult because:

- Most vulnerability databases are proprietary and can incur significant costs for access.

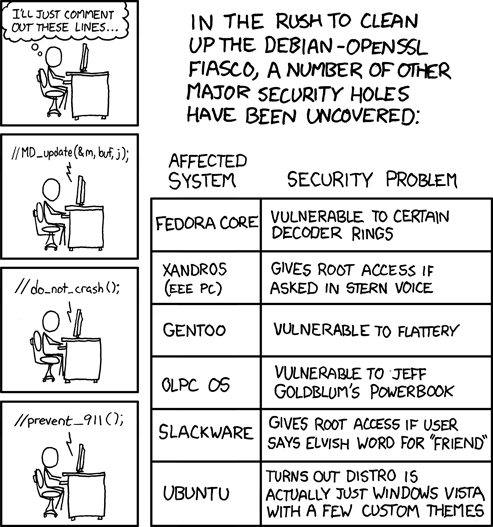

- There are some prominent open databases such as the NVD (National Vulnerability Database from NIST), but the most current vulnerability data may be distributed across many sources, each with its own reporting format. Examples are GitHub, Red Hat and many, many individual FOSS projects. VulnerableCode is a new free and open vulnerability database that aggregates and improves vulnerability data from many sources.

- There are many false positive vulnerabilities. VulnTotal addresses this by cross-validating the vulnerability coverage across publicly available vulnerability checking tools and databases.

- The exploitability and impact of a vulnerability varies widely according to how a component is used.

6. Code Quality Reporting

The need to understand code quality is not unique to FOSS components, but with FOSS components, the advantage is public access to the source code. Component authors may provide code quality data for a project, but FOSS or proprietary tools can also perform assessments of component code quality. These tools are typically different for different platforms and languages.

7. Community Health Reporting

Community or project “health” data is available for any FOSS project, except for when a company posts only code releases under a FOSS license without any external visibility into issues or code changes and commits. It is easy to obtain basic community health data from a project repository on GitHub (like the number of stars) or another platform, but it can be challenging to organize this data for analysis. The CHAOSS Project created metrics, models and software to measure the Community Health of a FOSS project. If you have a well-defined database of the components you use and their provenance, tools like GrimoireLab from the CHAOSS project can provide actionable information about community health for a component or a set of components from the same project.

8. SCA Management

Given the broad array of data and processes needed to handle SCA, it is no surprise that a robust system is needed to store SCA data, implement policies and provide analytics. It may be useful to distribute some elements of SCA data in existing software development repositories, such as storing validated license data with code, but this will not work for dynamic data like vulnerabilities. The broader issue is that you need an SCA system that can be easily shared among Business, Legal and Technical teams. For example, your Legal team will need a system where they can define your license policies without requiring Legal staff to use your software development systems.

The best solution is to integrate a purpose-built enterprise-level SCA Management system with your software development systems via APIs. In this scenario, the SCA Management system is where you set policies, store component and SBOM master data and track compliance. Within your software development environment, you can use team-level SCA tools for specific functions like license scanning or tracking vulnerabilities on a regular schedule, such as per commit, daily, or weekly, with API access to your SCA Management system for policies, storing your master data and consuming or generating SBOMs.
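The split between centrally defined policy and team-level checks can be sketched as follows. Everything here is a hypothetical stand-in: the `LICENSE_POLICY` table represents policy that a Legal team would maintain in the SCA Management system and that a build step would normally fetch over an API, and the status values and component list are invented for illustration.

```python
from typing import Dict, List, Tuple

# Hypothetical policy table; in practice this would be retrieved from the
# SCA Management system rather than hard-coded in a development tool.
LICENSE_POLICY: Dict[str, str] = {
    "MIT": "approved",
    "Apache-2.0": "approved",
    "GPL-3.0-only": "restricted",
}


def evaluate_components(
    components: List[Tuple[str, str]],
    policy: Dict[str, str],
    default: str = "needs-review",
) -> Dict[str, str]:
    """Map each component PURL to the policy status of its license.

    Licenses missing from the policy fall back to 'default' so that
    unknown cases are routed to a person instead of passing silently.
    """
    return {purl: policy.get(license_id, default) for purl, license_id in components}


# Hypothetical components as (PURL, SPDX license identifier) pairs.
components = [
    ("pkg:npm/example-lib@1.2.3", "MIT"),
    ("pkg:pypi/example-tool@0.9.0", "GPL-3.0-only"),
    ("pkg:maven/com.example/widget@4.1.0", "LicenseRef-Custom"),
]

for purl, status in evaluate_components(components, LICENSE_POLICY).items():
    print(f"{status:13} {purl}")
```

Keeping the policy table outside the development tools is the design choice that matters: Legal can change a status without touching a build pipeline, and every team-level check picks up the change on its next run.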

DejaCode is an example of a complete enterprise-level SCA Management application. As a system of record for SBOMs, DejaCode automates open source license compliance and ensures software supply chain integrity, enabled by ScanCode and VulnerableCode.

How to implement SCA

The first step for implementing SCA for an organization (or some element of your organization depending on the size) is to define objectives and policies. These are likely to change quite a bit as you go through the real-world process of implementing SCA but you should start with clear objectives:

- Do we need SCA for internal or external customers? Or both?

- What regulatory obligations do we have?

- What are the target benefits for our organization?

In order to define these SCA objectives, a cross-functional group needs to convene, covering at least 3 perspectives:

- Business: What are business objectives? This will typically include customer requirements, internal controls and cost.

- Legal: What are the legal risks we need to address? This will typically include licensing risks and regulatory requirements.

- Technical: What are the benefits and risks for the software development organization? This will typically include velocity, quality and security.

In large organizations, this cross-functional group is often called an Open Source Program Office (OSPO), but for a smaller organization, it may only require a steering committee or similar group. The key point for this group is to define your SCA objectives and policies and determine who will be responsible for SCA implementation.

Historically, the Legal team led the effort to organize what we now call SCA because of the prominence of software licensing policies for FOSS. In some cases, a Security team took the lead, especially if a startup was more worried about security than licensing. With the current velocity and volume of FOSS packages in use for modern software development, it is more practical for the software development organization to lead, because they will need to implement automation within their processes to have any realistic chance of implementing SCA policies and achieving SCA objectives. The Business, Legal and Security groups are usually not close enough to the actual work to be done to take the lead.

Once the objectives have been set, you are ready to look at your platform(s), framework(s) and overall software environment to determine which tools to use. That decision is heavily influenced by how many different technologies you use and how you use them. It may be that you need to make some changes in your software development processes to accommodate SCA tools. This may be a good thing if your process was not structured or standardized enough.

Why build FOSS tools for SCA?

nexB’s philosophy is to build the FOSS tools needed for FOSS SCA. The ability to reliably reuse software components is fundamental to all modern software development.

When nexB started working on SCA, we knew that it was imperative to have open source tools to manage open source software. We built our first FOSS tools under the name AboutCode, and fostered a large community with contributions from open source developers, leading companies, and students.

We started with ScanCode to handle the identification and licensing of software components. While developing ScanCode, we identified the urgent need for a “mostly universal” package identifier, which we addressed with the invention of Package URL (PURL). PURL quickly attracted interest from key package ecosystem leaders who added software to implement PURLs for 9 major package types (more coming). The PURL specification was the starting point, but the fast development of supporting software was the key to rapid adoption by package ecosystems, ISVs, CycloneDX and SPDX.

As we continued to build robust SCA tools, we recognized the need for an open source vulnerability database, one based on open data and FOSS tools. VulnerableCode offers vulnerability reporting and data as a free service. AboutCode’s newest major project is PurlDB, which features a reference database of packages and their metadata with MatchCode tools for exact and fuzzy matching of software components to known packages. We have also developed over 20 other FOSS SCA tools and libraries with more to come.

Our next blog post in this series will be an in-depth discussion of free and open source tools available for Software Composition Analysis.